CHI Student Design Competition: top-4 finalist out of 62 entries, May 2016.

AN IN-CASE-OF-EMERGENCY SOLUTION FOR THE DEAF

LifeKey is a custom keyboard that facilitates communication between Deaf individuals and emergency personnel. The project grew out of a partnership between the Pittsburgh Regional Health Literacy Coalition, the Center for Hearing & Deaf Services, and the Carnegie Mellon School of Design. We were tasked with developing a tool that would improve health literacy among Deaf individuals.

Client: Pittsburgh Regional Health Literacy Coalition, Center for Hearing & Deaf Services

Audience: Deaf and Hard-of-Hearing individuals

My Role: Client management, ideation, storyboarding, prototyping

Duration: Sep - Dec 2016 (4 months)

User Groups

Challenge

Emergency situations are stressful for anyone, and Deaf individuals are more likely to have trouble communicating both with emergency operators and with the emergency personnel who arrive on scene. The ability to text emergency services via SMS has become more widespread worldwide, but limitations remain: time delays, language literacy issues, and, most importantly, the absence of an emergency operator's comforting voice walking texters through the process.

Solution

LifeKey is a custom smartphone keyboard and companion app that facilitate communication between Deaf individuals and emergency personnel. The LifeKey keyboard consolidates information resources and communication tools to make texting emergency services faster, easier, and more comforting. The LifeKey app supports communication between Deaf individuals and emergency responders on scene.

We began with a focus on Deaf health literacy.

We combed existing literature on Deaf culture and healthcare access.

Our research into Deaf culture and healthcare access surfaced two key design implications:

American Sign Language ≠ written/spoken English: The grammar and syntax of American Sign Language (ASL) differ significantly from those of English, which frequently causes literacy issues when Deaf individuals read and write English. A viable tool needed to be highly visual, with minimal text.

Find the in-betweens: Under the Americans with Disabilities Act, Deaf individuals have the right to an interpreter in healthcare settings. Because Deaf access to interpreters is already strong, we concluded it would be more impactful to focus on situations where an interpreter is not present.

We took a 360-degree approach to interviews.

We interviewed 9 Deaf individuals, 1 Hard-of-Hearing individual, employees of the Center for Hearing & Deaf Services, and a doctor who has a Deaf patient. To facilitate interviewing, we created a participatory design tool that asked interviewees to provide quantitative and qualitative feedback on their interactions with healthcare professionals. We used affinity diagramming to synthesize results.

We narrowed to emergency situations for the most impact.

We found that for many of the Deaf people we spoke to, emergency situations were their most negative healthcare experiences. Participants struggled with contacting emergency services, summarizing the situation to emergency responders, and understanding emergency responders' explanations, all without the assistance of an interpreter.

“When an ambulance came to treat my daughter when she was having seizures, I had no idea what they were saying.”

We learned about the other side.

We observed the busy Allegheny County Emergency Services (ACES) call center to learn what information 9-1-1 operators need and what workflows they follow when responding. Our insights from the 9-1-1 center emphasized the need for a solution that works within the existing 9-1-1 technology stack, and introduced us to text-to-911.

We analyzed existing solutions.

We conducted a competitive analysis of 15 existing solutions designed to assist Deaf people in emergency situations. We found few tools that both helped throughout the entire emergency and took advantage of the technology Deaf people already use.

We ideated and user tested many ideas.

We speed-dated concept cards.

We brainstormed design ideas to address our mission statement, then discussed design concept cards with Deaf individuals, experts from the Pittsburgh Regional Health Literacy Coalition, and our ACES contact. We used these conversations to filter ideas by feasibility and impact.

We storyboarded ideas in context.

Once we chose our final concept, we used storyboards to show the tool in context through our persona, Jim, who responds to a fire in the middle of the night using the LifeKey keyboard and app.

We prototyped at several fidelities.

We prototyped iteratively, incorporating feedback after each round. For example, our contact at the 9-1-1 call center stressed that knowing an emergency's location is their top priority, so we made sending location a default. Based on user testing with our Deaf contacts, we also expanded the solution to include a communication tool for when responders are on scene.

See the interactive prototype: https://projects.invisionapp.com/share/Y55AJ8ODJ#/screens

FINAL SOLUTION

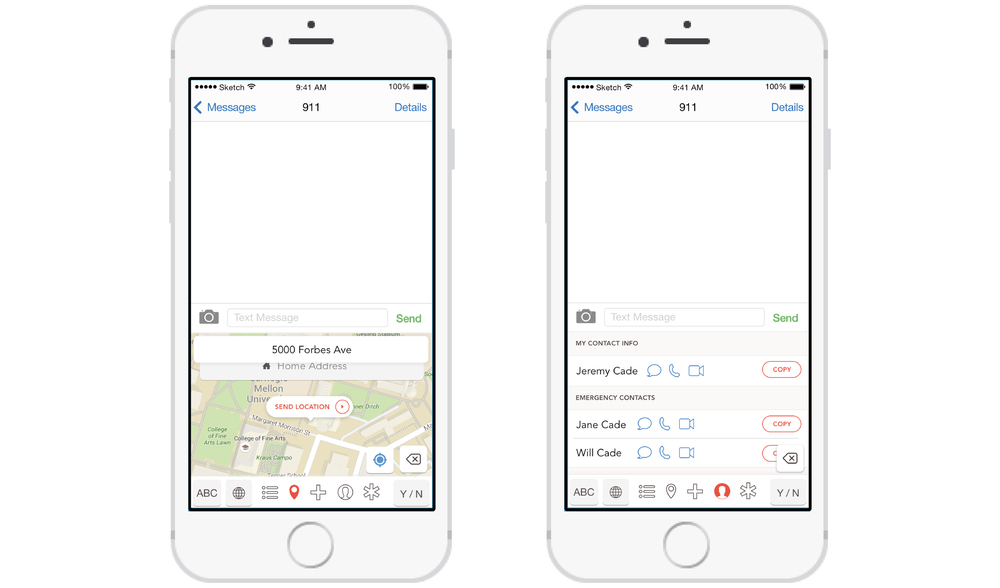

Custom Keyboard

ACCESS WITHIN SMS MESSAGING

The keyboard can be accessed directly from within the SMS messaging app, so users don’t need to find another app during a stressful situation. This also ensures that emergency call operators receive the metadata they need for their response.

QUICKLY SUMMARIZE EMERGENCY

A few taps are enough to explain the emergency. The options offered were chosen based on an analysis of emergency calls. This design minimizes typing and accommodates language literacy issues.

INCORPORATE OTHER APP INFO

LifeKey pulls in information that emergency call operators commonly ask for, so that users can easily send this information without exiting the SMS conversation. Detailed location, emergency contact info, and personal medical information can all be accessed and shared from within the keyboard.

REFERENCE MEDICAL VISUALIZATIONS

A visual medical reference displays common first-aid procedures to complement the medical instructions an emergency call operator may give via text. The keyboard also incorporates the user’s In Case of Emergency (ICE) medical information so it can be shared with emergency personnel.

Mobile App

On iOS, a custom keyboard must ship inside a containing app. The LifeKey app can be launched from the keyboard and is meant to facilitate in-person conversation when emergency responders arrive on scene. The app transcribes responders’ spoken words and reads the user’s typed replies aloud. It also shows the preceding text-message conversation, making it easy to bring responders up to speed. Finally, the app includes the medical reference and In Case of Emergency (ICE) information, as well as setup and customization options.

LESSONS LEARNED

Lesson #1: Deal with uncertainty.

When we first started the project, our scope was quite broad: just 'something to improve health literacy in the Deaf community.' The result could have been analog or digital. Through the process of finding a problem and then ideating on a solution, I learned to handle, and even enjoy, this large amount of uncertainty. This lesson has helped me considerably in my career.

Lesson #2: It can be uncomfortable when you don't understand a culture: deal with that too.

Before this project I had no connection with the Deaf community, and only one of our teammates had any (moderate) sign language skills. I'll admit that in our first interview, we brought a subconscious mentality of 'tell us about the problems in your healthcare experience that you must have as a Deaf person.' We soon realized that this approach was offensive and would not yield useful research results. By changing our mindset and using a participatory design tool to discuss healthcare experiences neutrally, we were able to find pain points in a culturally sensitive way.